Why Schema-First Design Is the Secret Weapon for Today’s LLM Builders

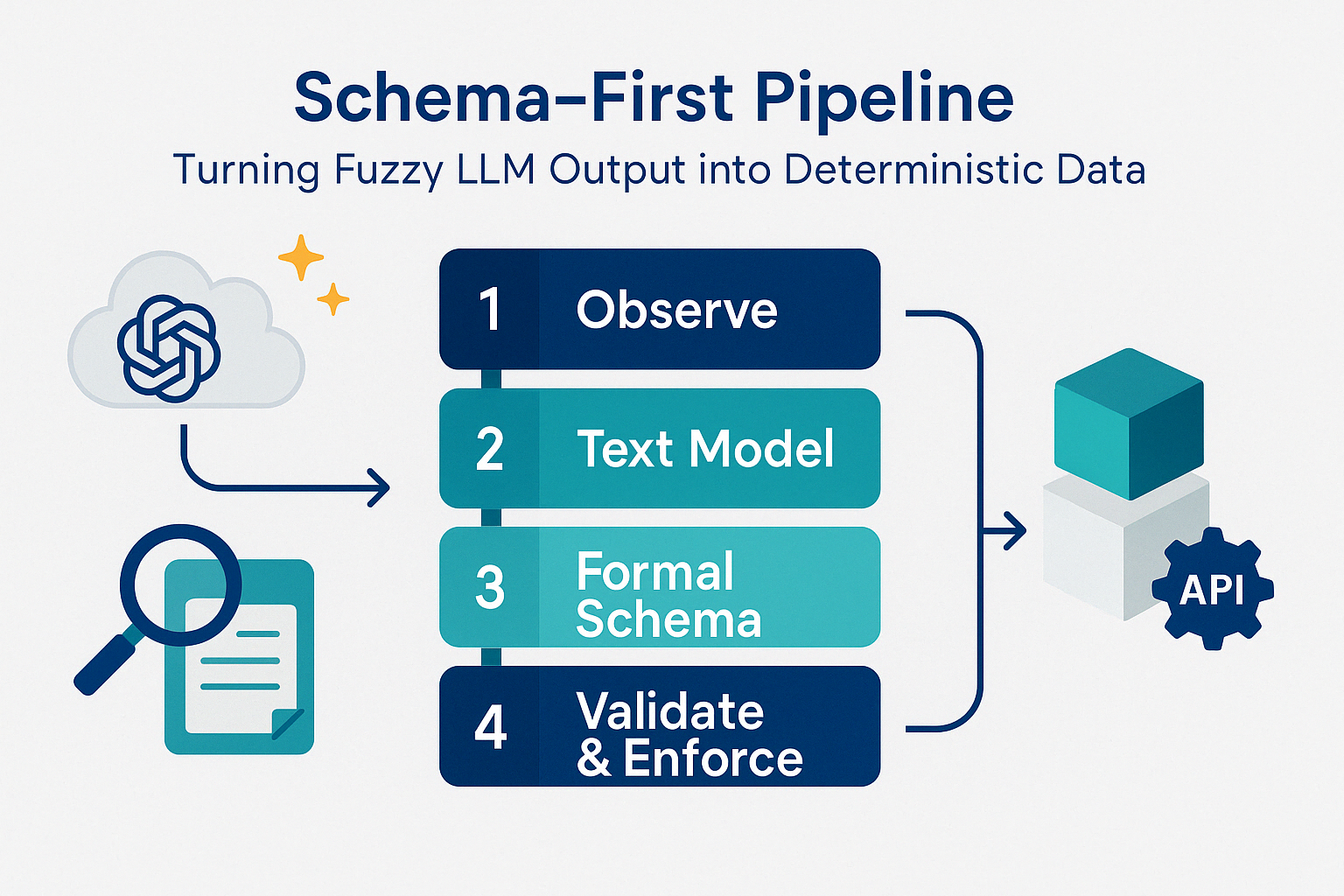

Large Language Models (LLMs) excel at generating human-like text, but their probabilistic nature can be a liability in production systems that demand precision and reliability. Whether you’re extracting data from receipts, powering a payment system, or managing state in a chat agent, you need structured, trustworthy outputs—not “probably correct” strings. This is where a schema-first approach, combining thoughtful domain modeling with tools like Pydantic, transforms fuzzy LLM outputs into deterministic, production-ready data. In this post, we’ll walk through this process using receipt data extraction as a case study, showing how to ensure your LLM-powered applications are both innovative and dependable.

The Challenge: Probabilistic Outputs Meet Deterministic Needs

LLMs predict the next token with some probability. That stochastic magic is perfect for ideation, summarisation, or answering fuzzy human questions. But it clashes with systems that expect hard constraints. This leads to issues like:

- Inconsistent formats: `"deliveryTime"` vs `"delivery_time"`.

- Type mismatches: `"42"` instead of `42`.

- Malformed data: partial JSON, stray comments, or unnormalized units (`"€29,99"` vs `Decimal("29.99")`).

For casual use, these quirks are tolerable. But in production—think APIs, databases, or compliance workflows—they’re potential points of failure. A schema-first approach addresses this by enforcing a contract between the LLM and your system, ensuring only valid, structured data gets through.
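To make these failure modes concrete, the sketch below parses a hypothetical LLM reply using only the standard library. The payload and its field names are invented for illustration. The point is that syntactically valid JSON can still carry the wrong key and the wrong type, and plain parsing will not notice.

```python
import json

# Hypothetical raw reply from a model; the consumer expects
# {"delivery_time": 42} with an integer value.
raw_reply = '{"deliveryTime": "42"}'

data = json.loads(raw_reply)  # parses without error: the JSON itself is valid

# Neither problem is caught by parsing alone.
has_expected_key = "delivery_time" in data
value = data.get("deliveryTime")
value_is_int = isinstance(value, int)

print(has_expected_key, value_is_int)
```

Both checks come back negative even though `json.loads` succeeded, which is the gap a schema is meant to close.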

Step 1: Domain Modeling—The Foundation of Reliable Schemas

Before writing a single line of code, you need to understand the domain you’re working with. Skipping this step often leads to schemas that miss critical fields or fail to handle real-world variability. Let’s take receipt data extraction as an example.

Observe the Real World

Start by collecting diverse examples—say, 20–30 receipts from paper scans, emailed PDFs, or photos. Note what appears consistently and what varies:

- Merchant name and address

- Transaction date and time

- Currency and totals (subtotal, tax, grand total)

- Line items (description, quantity, price)

- Payment method (cash, card, etc.)

- Optional extras: tax IDs, loyalty numbers, tips
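One lightweight way to turn these observations into evidence is to tally how often each field appears across the sample. The sketch below assumes you have hand-annotated each receipt with the set of fields visible on it; the sample data and the `field_coverage` helper are hypothetical.

```python
from collections import Counter

# Hypothetical hand annotations: the fields visible on each sample receipt.
samples = [
    {"merchant_name", "date", "grand_total", "payment_method"},
    {"merchant_name", "date", "time", "grand_total", "tax"},
    {"merchant_name", "date", "grand_total", "loyalty_number"},
]


def field_coverage(annotated):
    """Return the share of samples in which each field appears."""
    counts = Counter(field for fields in annotated for field in fields)
    return {field: counts[field] / len(annotated) for field in counts}


coverage = field_coverage(samples)

# Fields seen on every receipt are candidates for "required".
always_present = sorted(f for f, share in coverage.items() if share == 1.0)
print(always_present)
```

Fields with full coverage are natural candidates for required attributes, while the rest are better modeled as optional.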

Build a Conceptual Text Model

Next, describe the domain in plain language, distinguishing required from optional elements and identifying relationships. Here’s a conceptual model for receipts:

- A Receipt contains:

  - Merchant: name (required), address (optional)

  - Transaction: date (required), time (optional)

  - Currency: ISO code (required, e.g., `"USD"`)

  - Totals: subtotal (required), tax (optional), grand total (required)

  - Payment: method (required, e.g., `"CASH"` or `"CARD"`), card last 4 digits (if card)

  - Items: list of line items (optional), each with description, quantity, unit price, and total

  - Optional: tax ID, loyalty number, tip amount

This text model acts as a blueprint, ensuring your schema reflects reality—not assumptions.

Step 2: Translating the Model into a Formal Schema with Pydantic

With the domain understood, we can define a formal schema using Pydantic, a Python library that provides type-safe data validation. Here’s how our receipt model might look:

```python
import datetime as dt
from decimal import Decimal
from enum import Enum

from pydantic import BaseModel, Field


class PaymentMethod(str, Enum):
    CASH = "CASH"
    CARD = "CARD"
    OTHER = "OTHER"


class LineItem(BaseModel, strict=True):
    description: str
    quantity: Decimal = Field(gt=0, description="Number of items")
    unit_price: Decimal = Field(gt=0, description="Price per unit")
    line_total: Decimal = Field(gt=0, description="Total for this line")


class Receipt(BaseModel, strict=True):
    merchant_name: str = Field(..., description="Name of the merchant")
    merchant_address: str | None = None
    # The type is written as dt.date because a field named `date` would
    # otherwise shadow an imported `date` type inside the class body.
    date: dt.date = Field(..., description="Transaction date")
    time: str | None = None
    currency: str = Field(pattern="^[A-Z]{3}$", description="ISO 4217 currency code")
    subtotal: Decimal = Field(..., description="Total before tax")
    tax: Decimal | None = None
    grand_total: Decimal = Field(..., description="Final total including tax")
    payment_method: PaymentMethod
    card_last4: str | None = Field(None, pattern="^[0-9]{4}$", description="Last 4 digits if card payment")
    items: list[LineItem] = []
    tax_id: str | None = None
    loyalty_number: str | None = None
```

Key Features

- Type Safety: Fields like `grand_total` are `Decimal`, not strings.

- Constraints: `currency` must be three uppercase letters, the shape of an ISO 4217 code.

- Optional Fields: `tax` and `merchant_address` can be `None`.

- Descriptions: `Field` metadata guides the LLM and developers.
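To see how descriptions and constraints reach the LLM, it can help to inspect the generated JSON Schema. The sketch below uses a cut-down, hypothetical `CurrencyOnly` model rather than the full receipt, and assumes Pydantic v2.

```python
from pydantic import BaseModel, Field


class CurrencyOnly(BaseModel):
    """Hypothetical one-field model used only to inspect the schema."""

    currency: str = Field(pattern="^[A-Z]{3}$", description="ISO 4217 currency code")


schema = CurrencyOnly.model_json_schema()
currency_schema = schema["properties"]["currency"]

# The pattern and description travel with the schema, so a model that
# receives it as tool parameters sees both the rule and the intent.
print(currency_schema["pattern"])
print(currency_schema["description"])
print(schema["required"])
```

Because the field has no default, it also appears in the schema's `required` list.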

You can also add custom validators, like ensuring grand_total = subtotal + tax, to enforce business rules directly in the schema.

Step 3: Integrating the Schema with LLMs

To make the LLM produce structured data, provide the schema as a tool signature (e.g., via JSON schema) and validate its output. Here’s the workflow:

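- Generate the Schema:

```python
schema = Receipt.model_json_schema()  # Pass to LLM as tool parameters
```

- Call the LLM: Feed it an instruction like “Extract receipt data” along with the schema.

- Validate the Output:

```python
from pydantic import ValidationError

try:
    # llm_response is assumed to be the raw JSON string returned by the model.
    # With strict=True, JSON-mode validation still accepts ISO date strings
    # and enum values, which validating a pre-parsed dict would reject.
    receipt = Receipt.model_validate_json(llm_response)
    # Use the validated receipt object
except ValidationError as e:
    # Handle errors (e.g., retry or log)
    raise
```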

This ensures the LLM’s output conforms to your schema, turning probabilistic text into a reliable Python object.
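The validate-then-retry step can be sketched as a small loop. Everything provider-specific is left out here: `call_llm` is a hypothetical callable that takes a prompt string and returns raw JSON text, `MiniReceipt` is a cut-down stand-in for the full model, and the feedback wording is only one possible choice.

```python
from pydantic import BaseModel, ValidationError


class MiniReceipt(BaseModel, strict=True):
    """Cut-down stand-in for the full Receipt model."""

    merchant_name: str
    currency: str


def extract_with_retries(call_llm, prompt, max_attempts=3):
    """Call the model until its reply validates or attempts run out.

    call_llm is a hypothetical callable: prompt string in, raw JSON string out.
    Returns a tuple of (validated model, attempts used).
    """
    feedback = ""
    for attempt in range(1, max_attempts + 1):
        raw = call_llm(prompt + feedback)
        try:
            return MiniReceipt.model_validate_json(raw), attempt
        except ValidationError as exc:
            # Feed the validation errors back so the next attempt can self-correct.
            feedback = f"\n\nPrevious reply was invalid:\n{exc}\nReturn corrected JSON only."
    raise RuntimeError(f"no valid output after {max_attempts} attempts")


# Usage with a scripted fake model: the first reply misses a field, the second is valid.
replies = iter(['{"merchant_name": "Cafe"}', '{"merchant_name": "Cafe", "currency": "EUR"}'])
receipt, attempts = extract_with_retries(lambda _prompt: next(replies), "Extract receipt data")
```

Returning the attempt count alongside the object makes it easy to record how often repairs were needed.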

Benefits of This Approach

- Consistency: Every receipt object meets the same standard.

- Error Detection: Validation catches issues early, before they hit downstream systems.

- Maintainability: Update the schema in one place, and the contract evolves.

- LLM Guidance: The schema’s structure and descriptions improve the model’s accuracy.

Best Practices

- Use `strict=True` to prevent unwanted type coercion.

- Add `description` to fields to steer the LLM.

- Handle validation errors gracefully: retry with the LLM or escalate for review.
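The effect of strict mode is easiest to see side by side. In this sketch, assuming Pydantic v2, two hypothetical one-field models receive the same payload in which the quantity arrives as the string `"42"`.

```python
from pydantic import BaseModel, ValidationError


class LaxItem(BaseModel):
    quantity: int


class StrictItem(BaseModel, strict=True):
    quantity: int


payload = {"quantity": "42"}  # a string where an integer is expected

# Default (lax) mode silently coerces the string to an int.
lax = LaxItem.model_validate(payload)

# Strict mode refuses the coercion and reports a validation error instead.
try:
    StrictItem.model_validate(payload)
    strict_rejected = False
except ValidationError:
    strict_rejected = True
```

Lax mode hides the type mismatch, while strict mode surfaces it so that it can be retried or logged.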

Measuring Schema Health in Prod

To keep a schema-first pipeline healthy in production, instrument a small set of metrics. The validation-fail rate signals drift in prompts or the arrival of new document types; alert when it stays above 5%. The average number of repair attempts reflects how well the LLM adheres to the schema; a mean above 1.5 indicates a problem. Field completeness shows which optional fields are actually being populated; flag drops of more than 20 percentage points. Latency with retries captures the cost of self-healing; alert when p95 exceeds the 800 ms SLA.

Below is a detailed breakdown of these key metrics:

| Metric | What it tells you | Alert threshold |
|---|---|---|
| Validation-fail rate | Drift in prompt or new document types | > 5% sustained |
| Avg. repair attempts | LLM adherence quality | Mean > 1.5 |
| Field completeness | Which optional fields are useful | Drop > 20 percentage points |
| Latency with retries | Cost of self-healing | p95 > 800 ms (SLA) |

Hook these metrics into Prometheus/Grafana to monitor system health and iterate on prompts and validators accordingly.
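Before wiring up a metrics backend, the thresholds can be prototyped in plain Python. The sketch below is an illustration under several assumptions: `ExtractionEvent` is a hypothetical record type, `attempts` counts every LLM call including the first, p95 uses the nearest-rank method, and field completeness is left out for brevity.

```python
from dataclasses import dataclass


@dataclass
class ExtractionEvent:
    valid: bool        # did the final output pass validation?
    attempts: int      # LLM calls made, including the first (assumed convention)
    latency_ms: float  # end-to-end latency, retries included


def p95(values):
    """Nearest-rank 95th percentile, using integer arithmetic for the rank."""
    ordered = sorted(values)
    rank = (95 * len(ordered) + 99) // 100  # ceil(0.95 * n)
    return ordered[rank - 1]


def health_report(events):
    """Compute three of the metrics and list the alerts that would fire."""
    n = len(events)
    fail_rate = sum(not e.valid for e in events) / n
    mean_attempts = sum(e.attempts for e in events) / n
    latency_p95 = p95([e.latency_ms for e in events])
    checks = [
        ("validation_fail_rate", fail_rate > 0.05),
        ("avg_repair_attempts", mean_attempts > 1.5),
        ("latency_p95", latency_p95 > 800),
    ]
    return {
        "fail_rate": fail_rate,
        "mean_attempts": mean_attempts,
        "latency_p95_ms": latency_p95,
        "alerts": [name for name, fired in checks if fired],
    }


# Hypothetical batch: 18 clean extractions and 2 that failed after three attempts.
events = [ExtractionEvent(True, 1, 300.0)] * 18 + [ExtractionEvent(False, 3, 900.0)] * 2
report = health_report(events)
```

In a real deployment these values would be exported as counters and histograms rather than computed over an in-memory list, and the "sustained" part of the fail-rate alert would be handled by the alerting rules.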

Conclusion: Building Robust LLM Applications

A schema-first approach, rooted in careful domain modeling, bridges the gap between LLMs’ creative chaos and production systems’ need for certainty. By starting with a conceptual text model, translating it into a Pydantic schema, and validating LLM outputs, you create a pipeline that’s both flexible and reliable. Whether you’re extracting receipt data, managing chat agents, or enriching RAG metadata, this method ensures your application can scale from prototype to production with confidence.